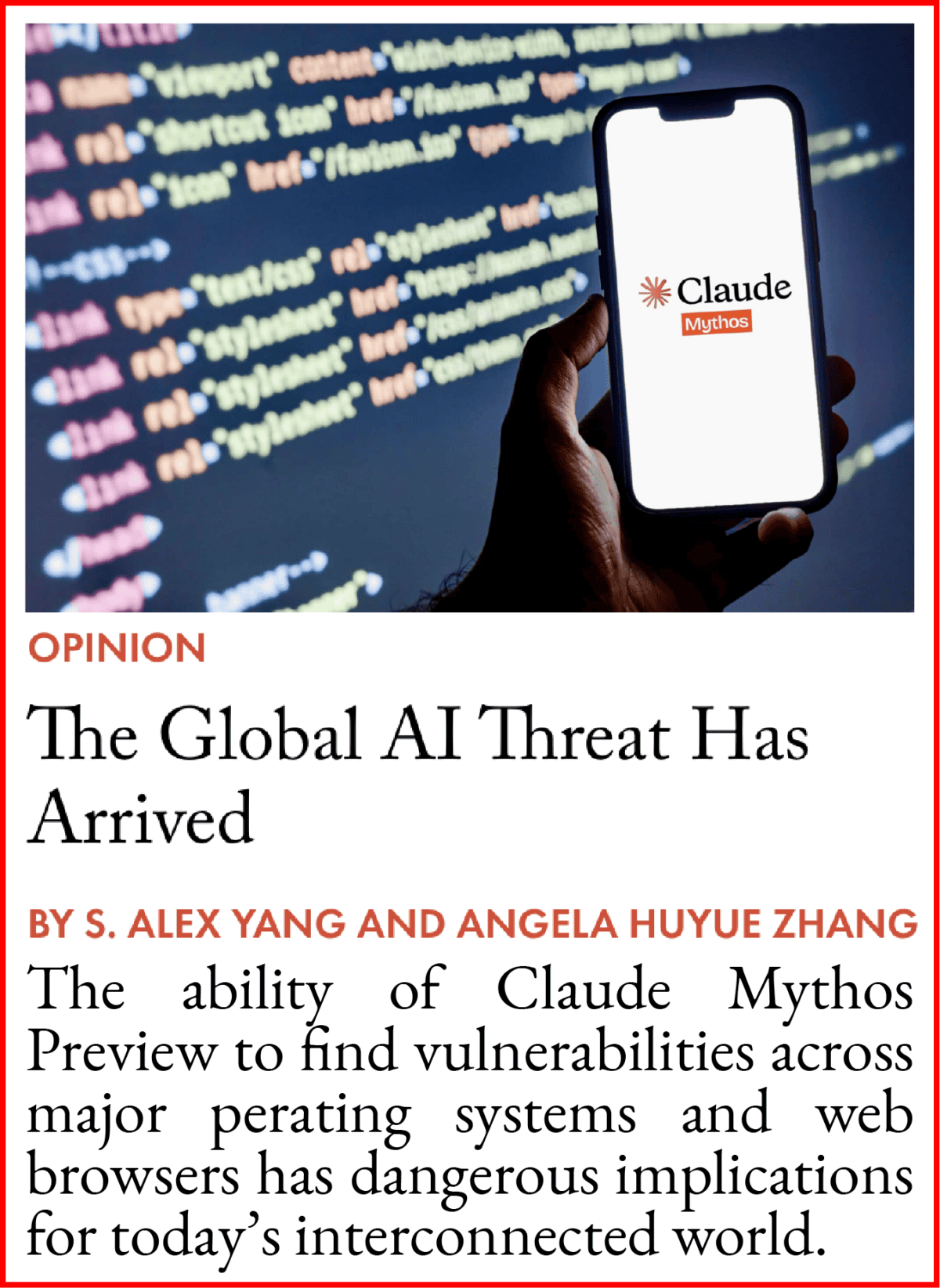

Sebastian Mallaby is a longtime financial journalist and a Fellow for International Economics at the Council on Foreign Relations. He is the author of a new book, The Infinity Machine: Demis Hassabis, DeepMind, and the Quest for Superintelligence, his first about artificial intelligence. After visiting China recently, Mallaby wrote a provocative op-ed in The New York Times arguing that the United States’ landmark export controls on AI semiconductors to China have failed, and that the U.S. and China need to come to the table to strike an agreement on AI safety, as the U.S. and the Soviet Union did on nuclear weapons during the Cold War.

Our conversation took place shortly before The Wall Street Journal reported that the U.S. and China are weighing whether to launch official talks about establishing AI guardrails at the Trump-Xi visit next week. In this lightly edited Q&A, we discussed how his reporting and his visit to China led him to his conclusions, what he thinks Washington has got wrong about China’s approach to AI, and what such an agreement ought to look like.

Illustration by Lauren Crow

Q: You’re a prolific author and have written about many different subjects over time. How did you decide to write about Demis Hassabis and about AI?

A: When I finished my previous book, called The Power Law, about venture capital, I was looking around for the next topic in mid-2022 — before ChatGPT came out. If you were paying attention to tech, as I had been because of my last book, it was pretty clear that AI was gathering a lot of momentum. And in particular, there were two projects from DeepMind, the Google subsidiary founded by Demis Hassabis: the AlphaGo system that defeated the top human players of Go, and the AlphaFold system that predicted all the shapes of proteins in nature — 200 million of them.

What these two projects had in common was that in both cases, the combinatorial space that you had to make sense of was just enormous. Go is a 19 by 19 board, so the first player has a choice of 361 squares in which to place the stone, and the second player has 360 options of how to respond. If you multiply this out, pretty soon you get to a number close to infinity. Hence the idea: the infinity machine. AI is a system that can make sense out of an infinity of permutations. It felt like this was a slightly niche but exciting and high-potential technology area.

I knew Demis Hassabis a bit because I’d been to tech conferences where he’d given talks. I knew he was the original lab leader in this space. He started DeepMind in 2010, much before OpenAI got started — OpenAI is basically a derivative copy of DeepMind. He was a serious scientist who had a PhD in neuroscience, and had deep thoughts about how the invention of machine cognition would change society, and what it would do for scientific exploration. He felt like an interesting figure, partly also because he’s based in London, which made him different from the Silicon Valley people I’d written about before.

So I thought I had a slightly niche topic, but also a great character. I pitched Demis on the idea in November 2022, and he agreed. And on the last day of that month, of course, ChatGPT came out. So my niche topic went from the fringe to the mainstream faster than I expected.

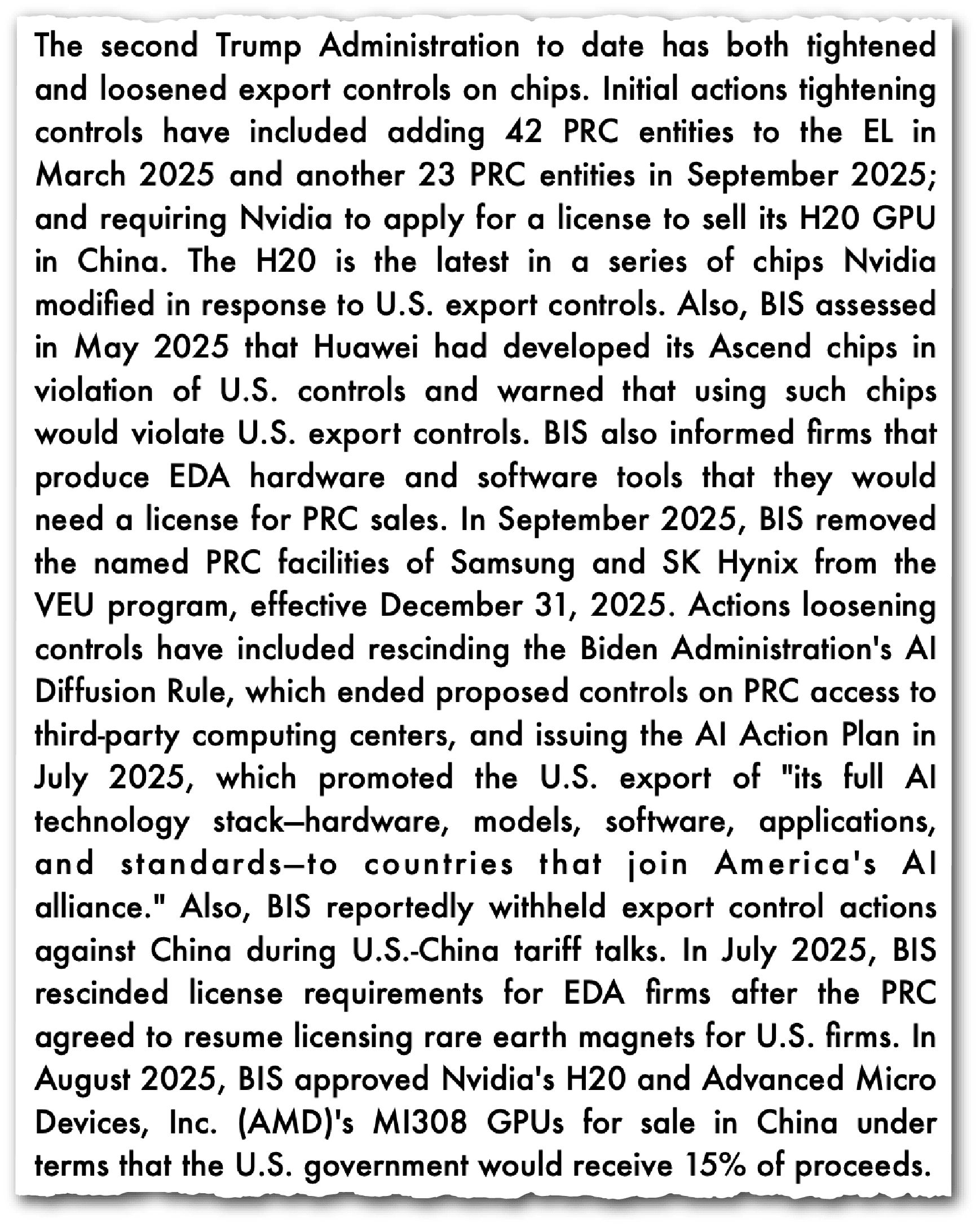

Q: The U.S.’s export controls on semiconductors and chipmaking equipment to China were also announced around that time. How did you get from the book to the argument you recently made in The New York Times that these controls have failed?

A: The background here is that in January 2023 I wrote a long opinion essay in the Washington Post on why I supported the chip export controls. It seemed plausible that they would stop China from getting frontier AI and would create a big lead for the U.S., and the strategic upside of that was very big. That was my original position.

| BIO AT A GLANCE | |
|---|---|
| AGE | 62 |
| BIRTHPLACE | London, UK |
| CURRENT TITLE | Paul A. Volcker Senior Fellow for International Economics at the Council on Foreign Relations |

By 2026, as I was preparing for the book launch, China had actually published the Chinese translation first, before the U.S. edition came out. So the publisher in Beijing — a very good independent publishing company called Cheers, founded by a husband and wife team whose mission is to bring foreign writing to Chinese readers — invited me to come and do a book tour in China. So I went to Beijing, Shanghai, Shenzhen, and Hangzhou for eight days. They took me to see the audience for my book, which turned out to be a mixture of academics doing AI research — so I went to AI institutes — and tech companies that were interested in implementing AI.

I went to see Huawei, I went to see Ant Group, I went to see Hikvision. What surprised me on the trip was the way that people brought up AI safety, without me mentioning it. This was contrary to what my friends in the Biden administration, who had implemented the chip controls, had led me to expect.

Their narrative was that if they could have talked to China about an AI safety, arms control-type regime, they would have been happy to do that; but in China, there’s no muscle memory of the Cuban Missile Crisis. The notion of catastrophic risk is probably associated in China more with the Cultural Revolution, the Great Leap Forward, stuff that goes wrong because it’s political. Technology, by contrast, is seen in China as part of the core reason for its successful economic growth story. So why would you be against technology? The story that Washington seemed to be repeating to itself was that people in China don’t care about safety. I went there, and found that they did care about safety.

Now, I’m not claiming that this was an exhaustive survey of all sections of Chinese decision-making. I was there for eight days; maybe I had three or four conversations about AI a day, so 30 or so in total. I’m not pretending to be the last word on this. But I was struck by the total contrast between what I experienced and what I had been led to expect.

| MISCELLANEA | |
|---|---|
| FAVORITE BOOK | Apple in China by Patrick McGee |
| FAVORITE FILM | When We Were Kings |
| PERSONAL HERO | Nelson Mandela |

I stress-tested this impression with different people during the visit. For example, I said to the man who translated my book into Chinese, “It’s interesting, I’m finding people talking about safety.” He said, “Yeah, there is an elite conversation, but don’t kid yourself, there isn’t much willingness in the Communist Party to do anything but accelerate. So be cautious with your view here.”

But even so, it feels to me like the fact that there is an elite conversation is new information to some people in Washington DC. I thought it was worth pursuing that variance of perception, and applying it to the chip export control policy.

Q: To the translator’s point, do the Chinese AI leaders who care about safety have any say in policy? For that matter, do American AI leaders? Just look at how Anthropic was squashed for trying to impose terms and conditions on what the U.S. military can do.

A: That’s totally fair. There’s obviously a range of views in the U.S. between the government and the private sector, and within the private sector different people think different things too. The same appears to be true in China. I did attend one dinner with multiple people who knew about AI, one of whom was a university president who was in Beijing to attend one of the Two Sessions, as part of the AI expert group advising the meeting. He was part of this dinner in which safety was discussed and he seemed to be taking that seriously.

I’m not pretending that this is a slam dunk, that they all want to do a safety deal with the United States. The AI race dynamic exists on both sides. But there are people in the U.S. government who assert with total confidence that they can’t talk to China because China’s not interested in safety. And maybe people in the Chinese government believe with equal confidence that they can’t talk to the U.S. because of safety. What I’m saying is that this assertion of a cultural division is wrong. Or at least exaggerated.

Q: What do people like Hassabis think about the prospect of negotiating with China on AI safety? Do they share the U.S. government view that you’ve heard?

A: It’s complicated in the sense that on the one hand, Demis of course understands that we have a global race dynamic and he doesn’t like that. He fears that we’re going too fast, we don’t have enough time to build in guardrails, do alignment research, make these models safer, maybe restrict, or at least know, who is getting access to them.

So I think he would agree with my logic that if you want AI safety — and in fact I quote him in my book saying this — everybody has to sign onto safety. If Chinese labs don’t sign onto safety, it’s not going to make the world safer. So it follows logically that you have to talk to China to get them to consider joining the West in implementing safety rules. Furthermore, Demis suggested the safety summit in 2023 which took place at Bletchley in England, an event to which Chinese delegates were invited.

On the other hand, any leader of any Western lab is highly conscious of the security risk from distillation of models [a process in which AI labs imitate a rival model by querying it millions of times and copying its behavior] and from commercial espionage. So Demis and the other lab leaders are wary of China. Also, if the United States and its allies can’t get a safety deal with China, the second best thing is to be ahead of China. So from Demis’s perspective, it’s not simply that we definitely need to speak to China and do a deal. Yes, that would be the best thing in theory. In practice, can we do it? Not clear.

And in the meantime, if we can’t do it, China is the competitor bordering on the enemy — because it’s not only competing with us, it’s also taking our intellectual property in various ways. I didn’t say stealing because I’m not sure that distillation is stealing; but it’s taking IP and using it for both a commercially competitive process and potentially a geopolitically competitive intent.

Q: So, if I understand it correctly, your argument for why the export controls have failed in 2026 is that the original premise by which they were set up, that China is an adversary on AI that the U.S. must stay ahead of, is shakier than you had once thought. And if China is not an adversary, and there is a realistic chance that we can sit down with them and hash out an AI safety agreement, then it is unnecessary to continue to try and be ahead of them every step of the way. Is that right?

A: That’s almost correct, though I would sequence it just a little bit differently. Given that we are where we are now with chip export controls, even though they’ve been weakened by the Trump administration, I wouldn’t unilaterally give them up. But I would say that it’s essential to try to speak to China about AI risks and to do an AI version of the non-proliferation regime which we had in the Cold War.

Because if you don’t do it with China, then they have labs that will produce very good AI, which will be open weight [a form of open source for AI models], and we know for certain that criminals and terrorists will get hold of powerful AI and do bad things with it.

This is not speculative. This is for sure.

If there is an open weight version of Mythos [Anthropic’s powerful new model that it says can outperform humans at some hacking and cybersecurity tasks] in the future, we’re in trouble. So we have to try to talk to China. And I think for U.S. policymakers to say, “Well, China’s untrustworthy, they say one thing and then they do the opposite,” is a little ahistorical in the sense that, how reliable was Khrushchev in the Cold War? This is the guy who banged his shoe on the table at the UN, this is the guy who stationed missiles in Cuba. And yet we did deals with the Soviets in that period. The [International Atomic Energy Agency] was created in 1956 and the [Treaty on the Non-Proliferation of Nuclear Weapons] in 1968. Even if you have a rival and a competitor and you don’t trust them totally, non-proliferation is something in which we both have an interest.

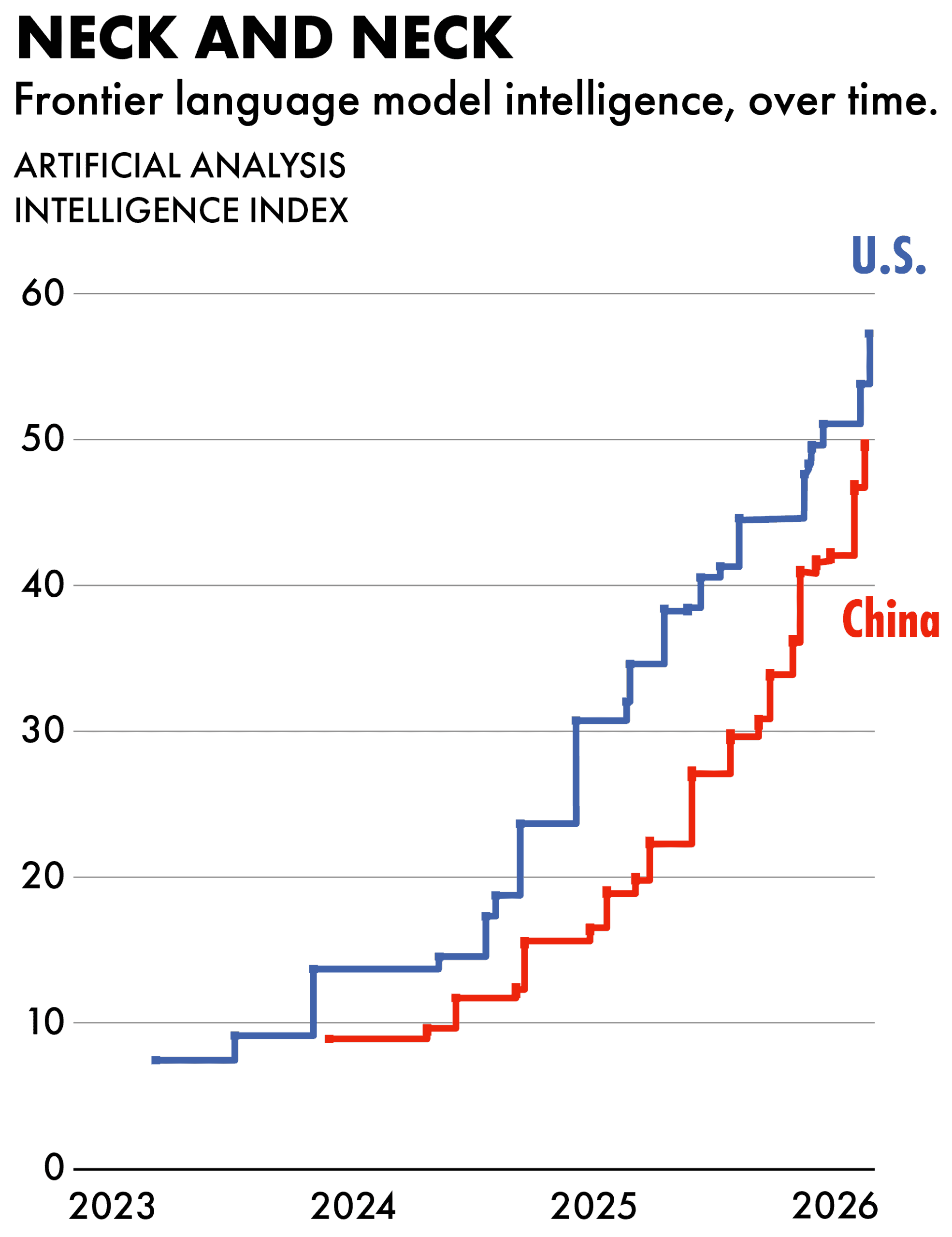

China doesn’t want criminals to do bad stuff with this stuff. They quite like regulating the internet. So we have a shared interest and it’s our duty, given the stakes, to have a shot at negotiating a global nonproliferation agreement where the core is that the U.S. and China have to agree. And if, in the process of getting that deal, we need to offer the sweetener of removing or loosening the chip controls, that would be a trade worth considering. Because I’m not sure what the upside is of the chip controls. It does slow China a bit. But it’s not like it’s stopping them from getting powerful AI. They’re six months behind. That’s it.

To broaden the lens slightly, there are two types of risk when you think about bad guys doing bad stuff with AI. The first kind is China may be a bad guy, China may get the models, China may do bad stuff to us because they are the competitor of the U.S. both commercially and geopolitically — let’s not kid ourselves about that. That was the Biden administration instinct, which I understood and supported in 2022.

But there’s also a different kind of bad guy who might do bad stuff with AI, which is the criminals and the terrorists. In the Cold War, the way we dealt with those two kinds of problems, with regard to nuclear weapons, is that when it came to U.S.-Soviet competition, there was mutually assured destruction and a balance of deterrence. That dealt with the U.S.-Soviet rivalry.

On the other hand, there was a separate problem that nukes might fall into the hands of terrorists, and we dealt with that through a non-proliferation regime. I’m suggesting we need to do the same thing now. We have two kinds of problems: maybe we deal with the China rivalry through mutually assured destruction and the balance of power, as well as specific measures like those we had with the Soviets, such as limits on how many weapons with nuclear warheads you could have and where they could be stationed. You could imagine some bilateral limits on the arms race.

But whatever you do in that category, don’t take your eye off the separate and alternative threat, which is actually more likely to materialize, which is that AI will be used, as it already has been used in Mexico to pretty bad effect, to do cyberattacks and then maybe in the future to create a bioweapon which will be worse than Covid.

Q: Are AI safety talks and the export controls mutually exclusive? Do you believe that China won’t come to the table unless those are dropped?

A: You could try to begin talks without dropping them. But put yourself in China’s shoes even just for five minutes. The U.S. has these extremely sweeping export controls: not only can you not have the chips, but you can’t have the chip-making equipment, nor can you have any person with U.S. connections advise you on using chip clusters. It’s quite hard to imagine being on the receiving end of all this, which has deeply disrupted some of their most important companies, and not viewing this as an act of aggression by America.

Because it was an act of aggression. So it can’t be constructive for bilateral relations in terms of having dialogue. I think we can talk to them whilst competing, and the competition may include keeping the export controls on for the moment. I’m just pointing out that it’s not as if the export controls have prevented them from getting powerful AI. So we shouldn’t view this as something we could never compromise on.

Q: In your op-ed, you mentioned an interesting discussion you had with a Chinese AI leader who said they weren’t sure how much longer they could keep their models open weight. Could you say more about that?

A: It was one CEO of one company who said this to me. But I’m not sure I can say more than what I said in the piece, because it was on background.

Q: The reason I ask is that if China’s own AI development path is leading it to the conclusion that it’s in their own interests to put their own constraints on AI soon, and to prevent the most advanced models from becoming public, then in some senses bilateral safety talks are unnecessary, aren’t they?

A: That’s an interesting point. But I would actually turn it around. I would say that it’s quite likely that if Chinese labs have Mythos-type technology in six months’ time, they will also, for their own domestic reasons, decide that it threatens the stability of Chinese cyberspace and that they’re going to restrict it. Just like the U.S. has made that decision for its own reasons domestically. That’s why we should talk to them, because it illustrates how we have shared interests.

Now, we would still want to talk to them because even if they were to control Mythos-type technology, it’s not the same as getting to a point where you agree on common safety standards, and also where both countries agree on avoiding spillovers. For example, China might restrict distribution of Mythos within China but potentially be okay with open weight versions of it being exported — because that would destabilize Western internet systems in a way that China might view as a good thing. It’s a big regime shift for China to go from open weight models to proprietary models, which is part of the necessary change that we need to push for.

Q: One of the issues you describe in your book is that Western AI labs have often felt they can’t slow down development to consider the risks of AI, because they’re in a race with China. Do you see a U.S.-China AI safety agreement as creating a window for the labs to slow down development and be more considerate about those risks?

A: The regime I’m envisaging is a little bit less dependent on norms than you’re implying. If the U.S. and China were to agree on safety standards, the standards would involve, in both countries, governments reviewing models before they’re released, and controlling the manner of the release a bit like the Food and Drug Administration controls the release of medicines.

[On Wednesday, National Economic Council Director Kevin Hassett said in an interview with Fox Business that the White House is considering an executive order that would require AI models to undergo an evaluation process similar to that used by the FDA.]

And if the U.S. and China saw eye to eye on this model of national review of safety that includes not having open weights, everybody else would have to sign up, for the simple reason that there’s no other country in the world that has an independent tech stack.

This whole debate is very interesting because as we get Mythos-type technology, AI is going to get stronger and stronger and we’re going to need to do something about it. But it’s also interesting because it’s genuinely complicated and finely balanced. I’m not pretending to be certain of my position. My position is we should try to talk to China about a deal. I’m not at all certain that we would get the deal. But I think it’s a bad idea not to try.

I debate these things with friends who don’t agree with me, who I respect a lot. Last week I had a debate with Jack Goldsmith of Harvard Law School who is an expert on international treaties and knows a lot about the NPT agreement. His perspective is that it’s not a terrific analogy because with nuclear weapons, you know what a weapon is; yes, there are civilian nuclear reactors, but those look fundamentally different. Whereas with AI the dual use is much more subtle and messier, so it’s harder to have a treaty that defines what unacceptable uses are.

I also have friends who have been in government who say, “Look, we’ve tried talking to China, it doesn’t work, they’re totally untrustworthy, we’ll just be taken advantage of if we do that.” Chris McGuire is one of these friends, my colleague at the Council on Foreign Relations who was in the White House in the National Security Council dealing with chip export controls. An absolutely fabulously smart guy, I really respect him. And we don’t agree.

He says to me, Look, you may be right that China has decently powerful AI for now, but their [lack of] access to chips is going to bite more as compute becomes even more complex and larger; and this advantage that the U.S. has in depriving China of access to top quality compute will grow in importance over time. So we shouldn’t give up on this policy because it is going to widen the U.S. lead in terms of frontier AI models. And if distillation becomes harder for China because model release is more restricted, that will increase the logic in favor of the chip export controls.

What I totally stand by is the idea that when I went to China, I saw something which was at variance with what a lot of people assume in Washington. The atrophy in travel back and forth to China since Covid has maybe reduced the information flow. I also stand by the idea that, with Mythos, we don’t have an alternative but to try to talk to them. It would be crazy not to make a more concerted effort than in the past. But I don’t pretend to have a monopoly on the truth of how this will play out in the next year.

Eliot Chen is a Toronto-based staff writer at The Wire. Previously, he was a researcher at the Center for Strategic and International Studies’ Human Rights Initiative and MacroPolo. @eliotcxchen