China’s latest artificial intelligence obsession is OpenClaw, an AI agent that doesn’t just answer queries like a chatbot, but can also control a computer and carry out tasks from managing social media accounts to monitoring stocks. But as with all obsessions, there is a risk that things could turn sour.

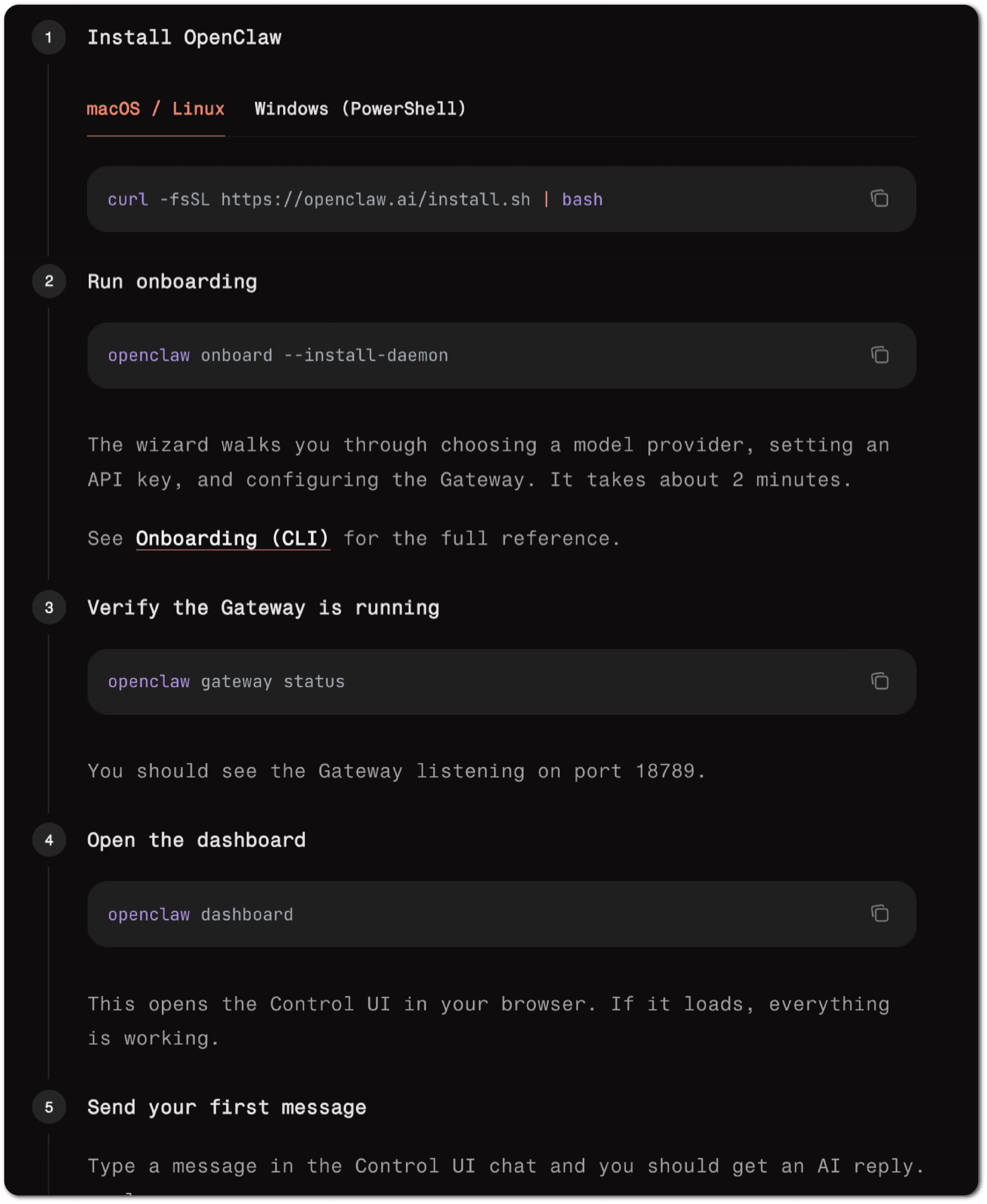

From Tencent to MiniMax, dozens of Chinese tech companies have released their own clawlike tools in the past few weeks, often by integrating OpenClaw, open-source software created by Austrian developer Peter Steinberger, with underlying code that is accessible to any user.

Enthralled by the prospect of virtual assistants that can handle digital tasks autonomously, individuals from retirees to housewives have jumped on the bandwagon, forming long lines at pop-up events hosted by Chinese tech firms to get OpenClaw — described by the Nvidia boss Jensen Huang as “the next ChatGPT” — installed on their devices.

Already, though, a backlash against OpenClaw, or ‘lobster’ as it is nicknamed in China, has begun. Some Chinese users have reported that AI agents they installed based on the software have handed over important data to strangers, including sensitive personal information, company financials and IP addresses. Others have racked up hefty bills after keeping their AI agents running in the background on their computers.

One consultant in Shanghai, surnamed Luo, told The Wire China he tried out QClaw, a tool Tencent has developed so that users can deploy OpenClaw and send it orders via WeChat. When he instructed QClaw to organize his files into two folders, the tool permanently erased dozens of documents, including reports he had written for his clients. “I will not use anything that has to be installed locally and I will not let AI touch my work computer again,” he says.

The growing number of similar reports from ‘lobster victims’ about problems with OpenClaw highlights an issue for the Chinese authorities. Eager to reap the economic benefits of AI, Beijing is striving to lead the shift from chatbots to agentic AI. But it also faces pressure to protect consumers and prepare them for the risks that can come with AI software, without stymieing the technology’s advance.

“A lot of the conversations Chinese regulators had post-2022 and ChatGPT is how to balance [regulation] with the need to stay competitive innovation wise,” says Kristy Loke, a fellow at MATS Research who focuses on China’s AI governance. “This is very much a test case of how well that works.”

China is counting on agentic AI as a long-term growth driver, hoping that it can turbocharge productivity and fill labor gaps. The central government has set an ambitious goal for the deployment of AI agents, aiming to reach a penetration rate of over 70 percent by 2027 in sectors such as healthcare and manufacturing, measured by the number of enterprises that have deployed AI agents in their workflows.

“China’s core strategy is diffusion of AI models and applications,” says Brian Tse, founder of Concordia AI, a Beijing-based social enterprise that promotes global safety around the technology. “There is a very proactive stance when it comes to deploying AI agents throughout different parts of the economy, from financial services to manufacturing.”

The rising use of agentic tools is reflected in China’s consumption of tokens, a unit that measures data processed by AI models. Daily token usage in the country, a metric for AI adoption, increased from 100 trillion at the end of 2025 to 140 trillion this month, the National Data Administration revealed this week. Other countries have yet to publish similar data.

Alibaba chairman Joe Tsai is the latest top tech executive to rave about the future of AI agents. Speaking at a summit run by German firm Siemens in Beijing on Tuesday, he recounted how his colleague had recently built four AI agents to create a synthetic tech influencer on social media: one agent screens the news to identify trends, another develops the thesis, and two are responsible for the writing and editing.

“Basically he’s created four virtual employees,” Tsai told the audience, adding that he expects AI agents to disrupt — if not replace — a vast swathe of white-collar jobs.

OpenClaw’s rapid growth in China has been spurred along by the fact that Chinese consumers are generally more optimistic about AI than their foreign peers, and more eager to try new technologies, according to survey results cited in Stanford University’s AI Index Report. On the flip side, they have to date appeared less concerned about AI safety.

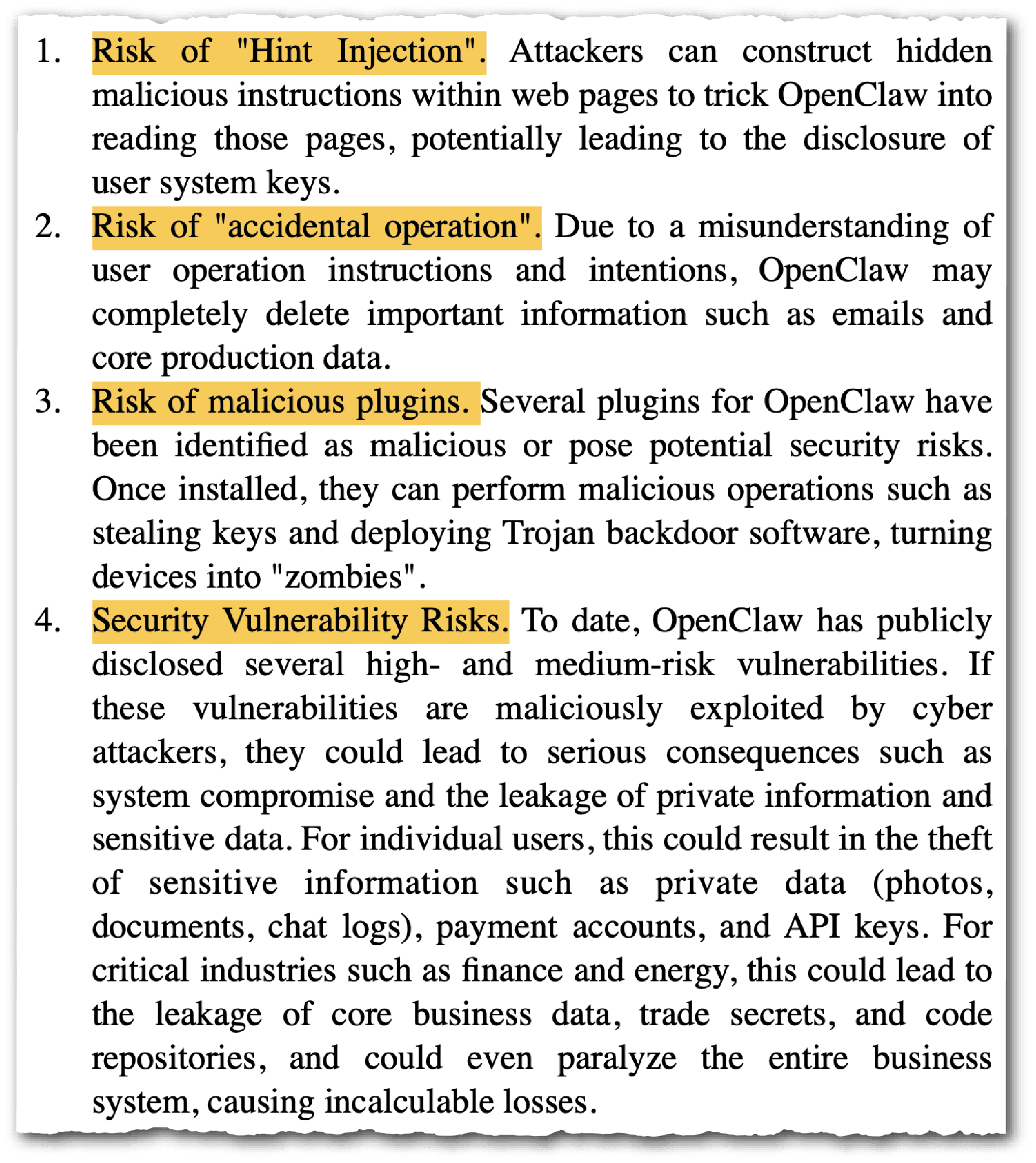

“The very features that make OpenClaw so functional also make it potentially dangerous in many ways,” says Gabriel Wagner, a part-time researcher at Concordia AI. For example, for the software to work, users have to grant it broad access to their computer system so it can control the device, and then install plugins termed ‘skills’, so that it can carry out different tasks. Last month, researchers from Snyk, a Boston-based cybersecurity firm, found that 13 percent of the skills on ClawHub and skills.sh — two popular online platforms for these extensions — contain critical-level security issues, such as malware.

The Chinese authorities are starting to share such worries. The country’s National Cyber Security Emergency Response Team (CNCERT), a government agency under the cyberspace regulator, highlighted four hazards with OpenClaw earlier this month. They include operational errors, where the agent software misinterprets user instructions, and the installation of malicious plugins, which can steal data.

Last week, the Ministry of State Security expressed further concerns about the wider societal impacts of OpenClaw, warning that it can be hijacked to spread disinformation on social media and commit fraud. It further noted that malicious plugins are much harder to detect than traditional malware.

Besides the cybersecurity vulnerabilities associated with OpenClaw and its ecosystem, an AI agent could also amplify the fundamental flaws of AI models such as so-called ‘hallucinations’ in which they present incorrect information as facts, says Tiezhen Wang, head of APAC Ecosystem at the developer platform Hugging Face.

“Large language models predict the next word’s probability distribution, so there’s always a small chance that it will pick the wrong answer,” says Wang. “As an autonomous agent that runs in the background even while you sleep, OpenClaw will turn these wrong words into action.”
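Wang's point about small errors turning into actions can be illustrated with some rough arithmetic. The sketch below assumes, purely for illustration, that an agent has a fixed chance of getting each step wrong and that steps fail independently of one another. Neither assumption comes from Wang or from OpenClaw, and the 0.1 percent error rate is a made-up figure.

```python
def chance_of_at_least_one_error(per_step_error: float, steps: int) -> float:
    """Probability that at least one of `steps` independent steps goes wrong.

    Assumes every step fails with the same probability and independently
    of the others, which is a simplification for illustration only.
    """
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    # The chance that every step succeeds is (1 - p) ** n; the complement
    # is the chance that something goes wrong at least once.
    return 1.0 - (1.0 - per_step_error) ** steps


if __name__ == "__main__":
    hypothetical_error_rate = 0.001  # 0.1 percent per step, an assumed figure
    for steps in (1, 10, 100, 1000):
        risk = chance_of_at_least_one_error(hypothetical_error_rate, steps)
        print(f"{steps:>5} steps: {risk:.1%} chance of at least one error")
```

Under these assumptions, an error that is negligible for a single chatbot reply becomes more likely than not, at roughly 63 percent, once an agent has taken 1,000 steps on its own.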

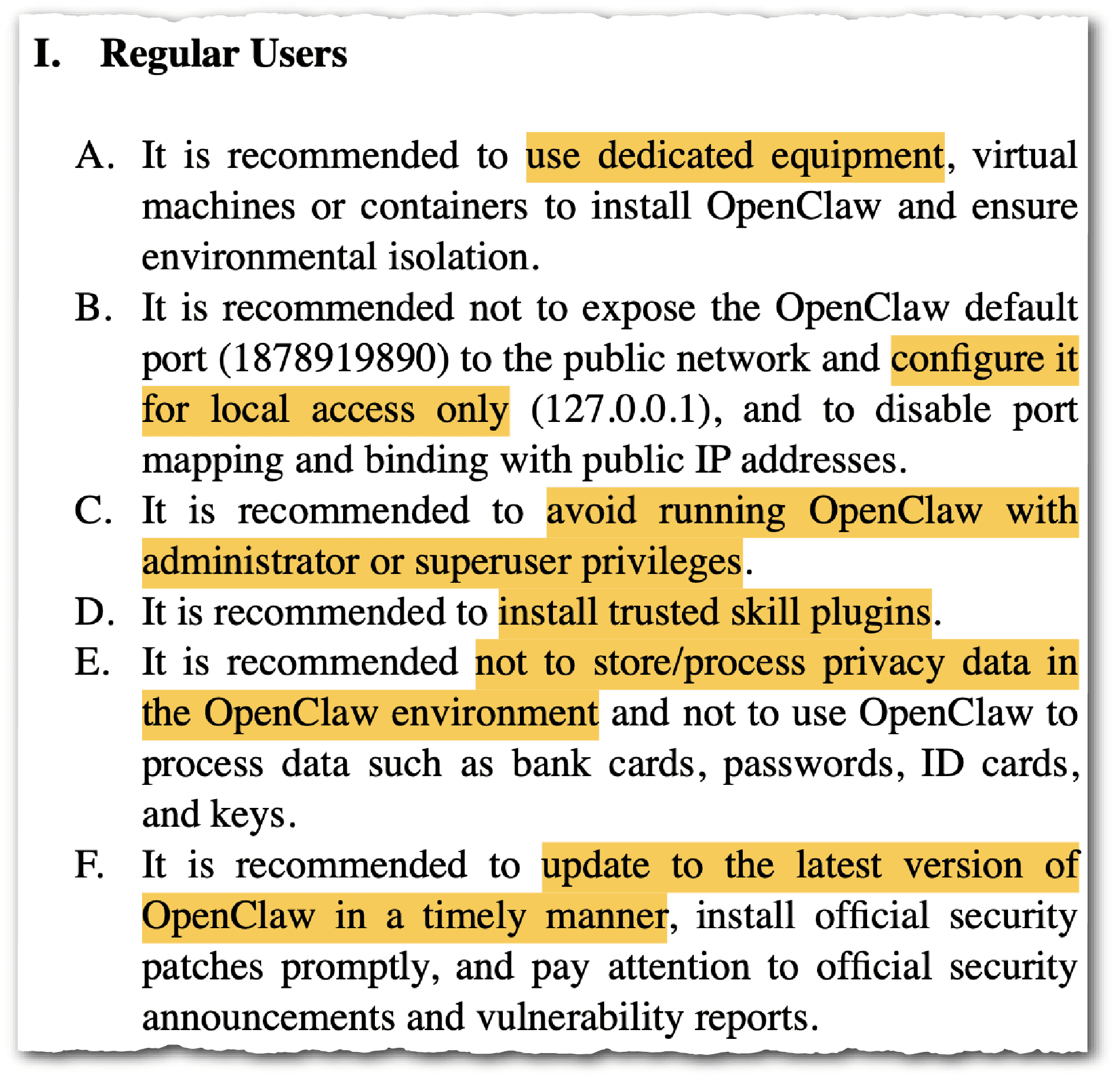

Wary of such perils, the Chinese government is trying to get users up to speed on the dos and don’ts around agentic AI. On Monday, the country’s cyberspace authorities jointly published a list of best practices for individual users, companies, cloud providers and tech enthusiasts. Companies, for instance, should make sure humans have oversight over high-risk actions, the guidelines say. The regulators have already banned employees of state-owned enterprises and government agencies from deploying OpenClaw.
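What human oversight over high-risk actions might look like in practice can be sketched in a few lines of code. The example below is a hypothetical illustration, not the regulators' specification and not part of OpenClaw: the `Action` type, the list of high-risk action kinds and the `run_with_oversight` function are all invented names.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of action kinds treated as high-risk. The published
# guidelines are not quoted here; these entries are assumptions.
HIGH_RISK_KINDS = {"delete_file", "send_payment", "share_data"}


@dataclass(frozen=True)
class Action:
    kind: str    # what the agent wants to do, e.g. "delete_file"
    target: str  # what it wants to do it to, e.g. a file path


def run_with_oversight(
    action: Action,
    execute: Callable[[Action], str],
    approve: Callable[[Action], bool],
) -> str:
    """Run low-risk actions directly; ask a human before high-risk ones."""
    if action.kind in HIGH_RISK_KINDS and not approve(action):
        # The human declined, so the action is never executed.
        return f"blocked: {action.kind} on {action.target}"
    return execute(action)


if __name__ == "__main__":
    def ask_human(action: Action) -> bool:
        reply = input(f"Allow {action.kind} on {action.target}? [y/N] ")
        return reply.strip().lower() == "y"

    def pretend_to_execute(action: Action) -> str:
        return f"done: {action.kind} on {action.target}"

    request = Action("delete_file", "client_report.docx")
    print(run_with_oversight(request, pretend_to_execute, ask_human))
```

The design choice here is that the gate sits between the agent's decision and its execution, so a misread instruction to delete files would pause for confirmation rather than run unattended.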

China has in general moved faster than other countries in terms of AI governance and legislation. The authorities there are currently drafting industry and national standards, including a security framework for AI agents, according to Wagner of Concordia AI.

One governance mechanism that could be introduced is issuing IDs for AI agents, so that they can be traced to their owners and whoever deploys them can be held accountable for their actions.

Introducing such rules involves striking a delicate balance. “It may slow down development because people may have to spend more time dealing with compliance,” says Wang. “On the other hand, regulation always lags behind technological development, so it may never be enough.”

Rachel Cheung is a staff writer for The Wire China based in Hong Kong. She previously worked at VICE World News and South China Morning Post, where she won a SOPA Award for Excellence in Arts and Culture Reporting. Her work has appeared in The Washington Post, Los Angeles Times, Columbia Journalism Review and The Atlantic, among other outlets.