As artificial intelligence continues its rapid development, calls from both within and outside the industry for countries to build a global system of oversight to check its risks are becoming more urgent. A recent move by the U.S. government to blacklist a Chinese research institute could make such cooperation even harder.

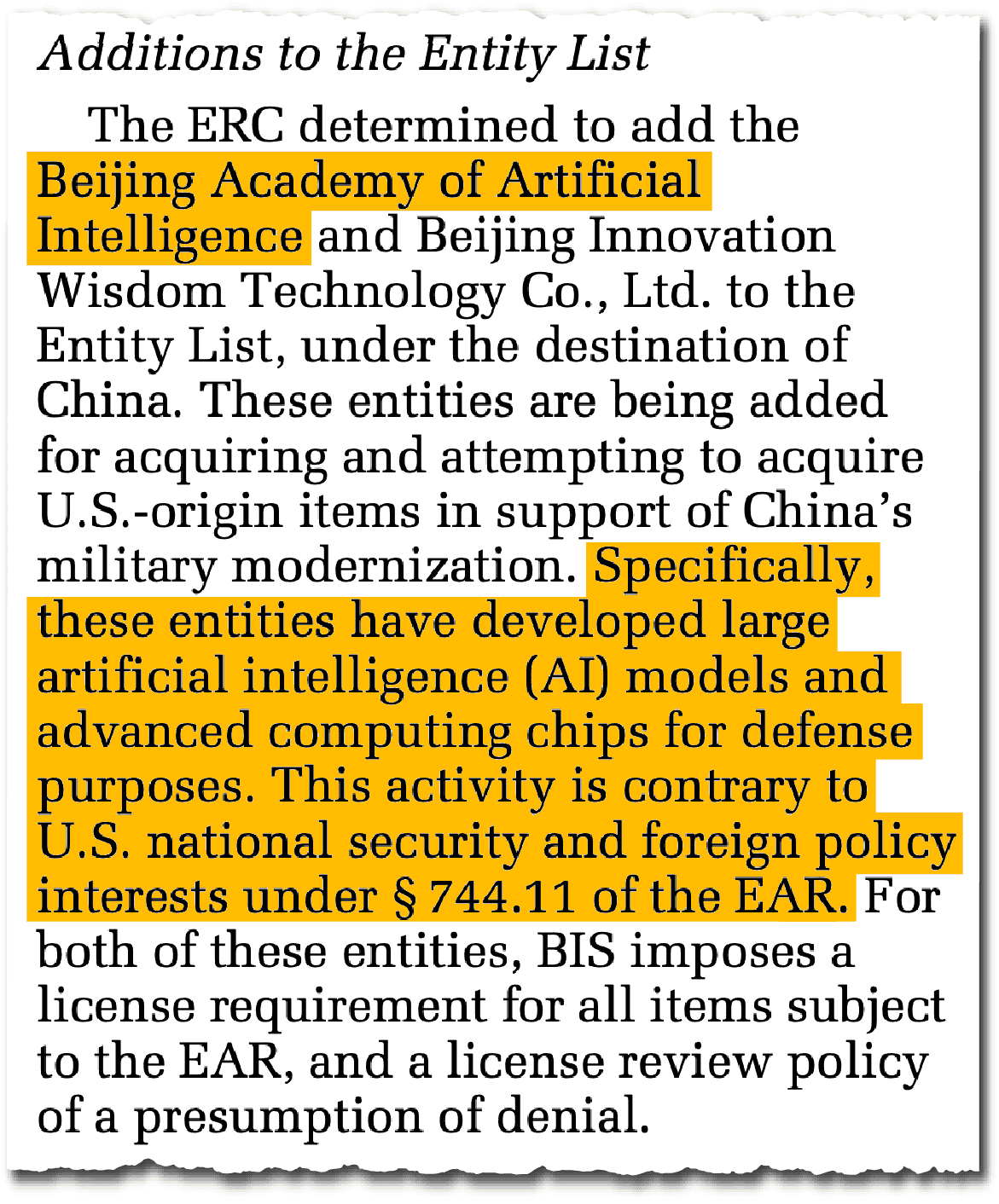

Late last month, the Trump administration added the Beijing Academy of Artificial Intelligence (BAAI) to its Entity List, which restricts exports based on national security concerns. The move is part of a new salvo of restrictions from the Commerce Department aimed at hindering China’s artificial intelligence and advanced computing ambitions.

BAAI’s inclusion in the list has drawn few headlines. But it could have far-reaching consequences. The academy is one of a handful of Chinese entities that have already been involved in international talks on AI’s risks. It’s also a member of a key Chinese industry association vying for representation at international AI safety summits.

The U.S.’s move against BAAI could now make the academy’s western counterparts less willing to participate in meetings where it is involved. That’s because Washington’s export controls limit not only listed entities’ access to U.S. hardware, but also the ability of American groups to engage with them on technical issues.

That, in turn, could make it much harder for western scientists and software engineers to work with China on tackling AI’s harms, from systems that can replicate themselves or deceive their creators to models that could enable the building of weapons of mass destruction.

Leading AI researchers, including some who pioneered this technology, have repeatedly stressed the importance of getting major powers rowing in the same direction on regulation. Excluding China, widely regarded as the world’s second-leading AI power, would create a gaping hole in any accord designed to protect against AI’s risks.

“There’s a real hindrance risk to knowledge sharing,” says Huw Roberts, a researcher at the University of Oxford’s Internet Institute, who studies China’s AI governance. The listing, he says, “adds more ambiguity to different forms of engagement with a key international-facing [organization] in China, and by extension China’s AI safety network.”

Experts on China’s AI ecosystem describe BAAI as having played a jack-of-all-trades role since its founding in 2018. The non-profit research institute, funded by the Ministry of Science and Technology and Beijing’s municipal government, produces open-source large language models akin to DeepSeek or Meta’s Llama.

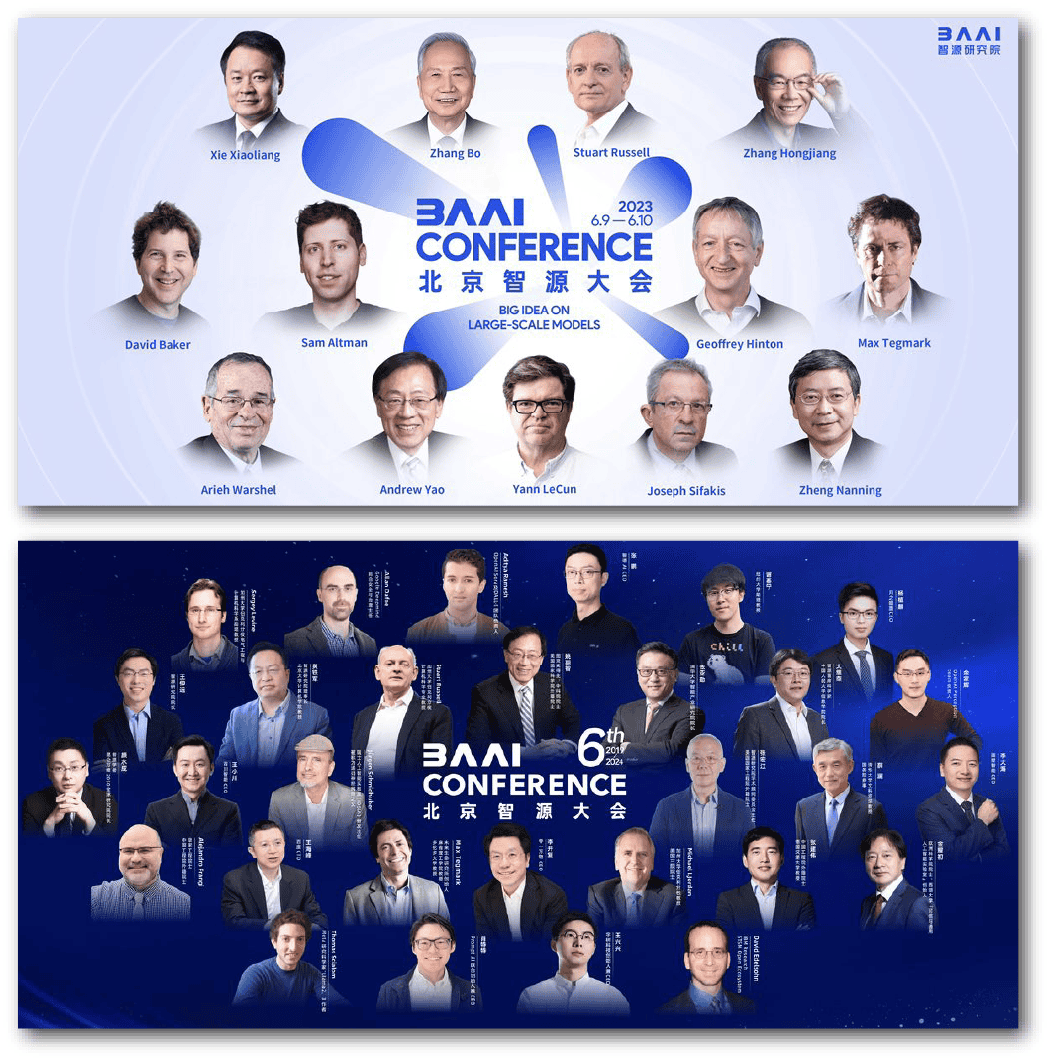

It’s also involved in AI safety research and standard setting. Its annual BAAI Conference is considered China’s most prestigious AI industry gathering, and has in the past drawn figures including OpenAI co-founder Sam Altman as well as top researchers from U.S. AI firms such as Google DeepMind and Anthropic.

Four people in The Wire’s recent Who’s Who of China’s AI industry are either currently or previously affiliated with BAAI, and almost all of them also have historical ties to U.S. entities. Wang Zhongyuan, BAAI’s president, was formerly a researcher at Microsoft Research Asia and Facebook. Zhu Songchun, a Peking University professor and BAAI director, previously spent 14 years at the University of California, Los Angeles and received tens of millions of dollars’ worth of federal research grants before moving back to China in 2020.


“BAAI has taken on an international cooperation role, trying to foster exchanges on AI safety,” says Caroline Meinhardt, an AI policy researcher who studies China’s AI governance. When the academy hosted a dialogue on AI safety in Beijing last year, participants included the Nobel prize winner Geoffrey Hinton and celebrated Chinese computer scientist Andrew Yao.

Those functions have made BAAI an influential interlocutor while China hashes out the sticky politics around who to send to represent the country at international AI safety talks.

Since 2023, a handful of countries have formed government-endorsed AI safety institutes, a new type of structure intended to centralize safety research and policymaking around the technology. The U.S. and U.K. were the first to create AI safety institutes (referred to as ‘AISIs’) in the fall of 2023, while the EU, Japan, Singapore, France and Canada have since followed suit. In November, the countries formally launched an international network of AISIs at a summit in San Francisco, with the U.S. acting as chair.

Conspicuously absent, however, was China, which has yet to set up its own AISI. Then-Commerce Secretary Gina Raimondo remarked on the difficulty of figuring out who to invite from China, telling the Associated Press: “we’re still trying to figure out exactly who else might come [from China] in terms of scientists.”

China’s Most Promising AI Safety Institute Counterparts

| Institution | Description | Location | Specialization | Technical research and evaluations | Standards | International cooperation |
|---|---|---|---|---|---|---|
| China Academy for Information and Communications Technology | Think tank within the Ministry of Industry and Information Technology | Beijing | Evaluations | ✓ | ✓ | ✓ |
| Shanghai AI Lab | Government-backed research institute with over a dozen cooperation agreements with Chinese universities | Shanghai | Technical research and evaluations and international cooperation | ✓ | ✓ | ✓ |
| Institute for AI International Governance | Policy-focused research institute under Tsinghua University | Beijing | International cooperation | ✓ | | |
| Beijing Academy of Artificial Intelligence | Research institute backed by the Ministry of Science and Technology and Beijing’s municipal government | Beijing | International cooperation | ✓ | ✓ | ✓ |

“There’s a very strong desire in China to be part of these global conversations and have a proper seat at the table,” says Meinhardt. The San Francisco meeting, she says, “created a lot of chatter in China about whether it should have a national AISI.”

China’s response finally came in January, when it announced the creation of an “AI Safety and Development Association,” a consortium of Chinese players including BAAI, Peking and Tsinghua universities and five other groups. But the association has yet to appoint a leader, a delay that three people close to the member groups chalk up to ongoing jockeying among the members.

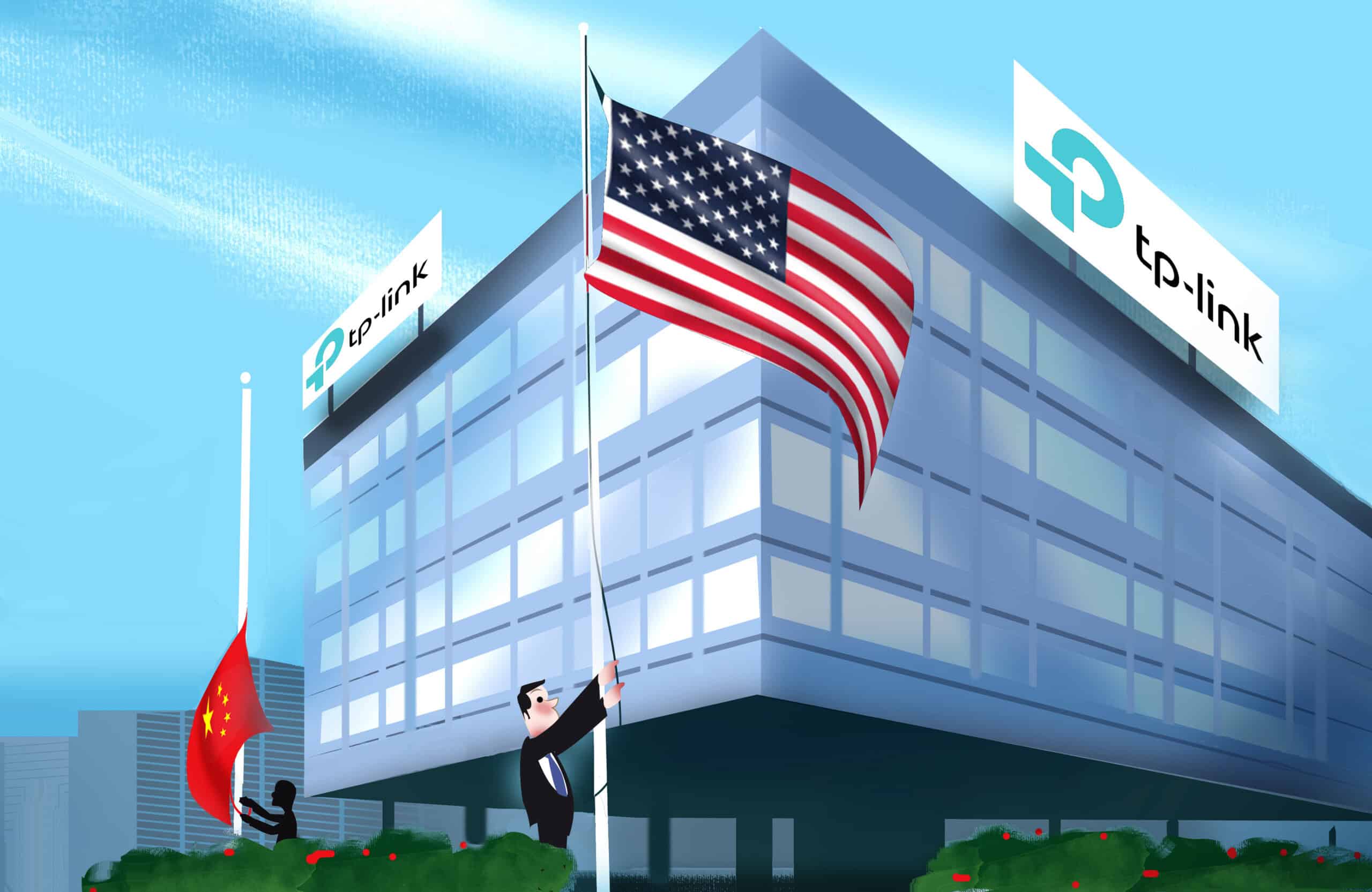

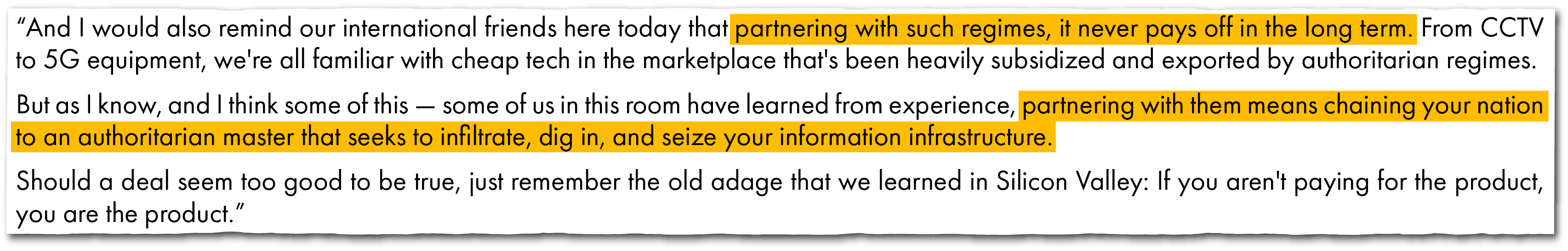

Even if the association does hash out its leadership, it may still not be welcomed into the U.S.-led AISI network. At a recent AI summit in Paris, U.S. Vice President JD Vance warned against cooperation with China on AI regulation: “partnering with them means chaining your nation to an authoritarian master that seeks to infiltrate, dig in, and seize your information infrastructure.”

“It’s not guaranteed that having a Chinese AISI means they’ll be granted a seat at the table,” says Meinhardt. “They could be excluded for all kinds of reasons, and that may contribute as well to the reluctance to set up an AISI.”

BAAI’s blacklisting could also seriously deter other countries from engaging with China’s new AI safety association.

“I’ve heard from colleagues who have tried to organize events involving U.S. companies that say they don’t want to be involved in events with Chinese companies who are on the Entity List,” says Roberts.

Exactly why the U.S. authorities have targeted BAAI isn’t clear. In a two-line justification, the Commerce Department cited BAAI’s alleged work in developing AI models for defense purposes in support of China’s military modernization.

The Commerce Department and BAAI did not respond to requests for comment.

Observers say the listing may have to do with BAAI’s work with a Chinese military-affiliated entity known as Peng Cheng Lab. In 2021, Huang Tiejun, BAAI’s dean, co-authored an influential paper with Gao Wen, Peng Cheng Lab’s director, and five other researchers, outlining how highly capable AI systems could escape human control and potentially turn against their human creators.

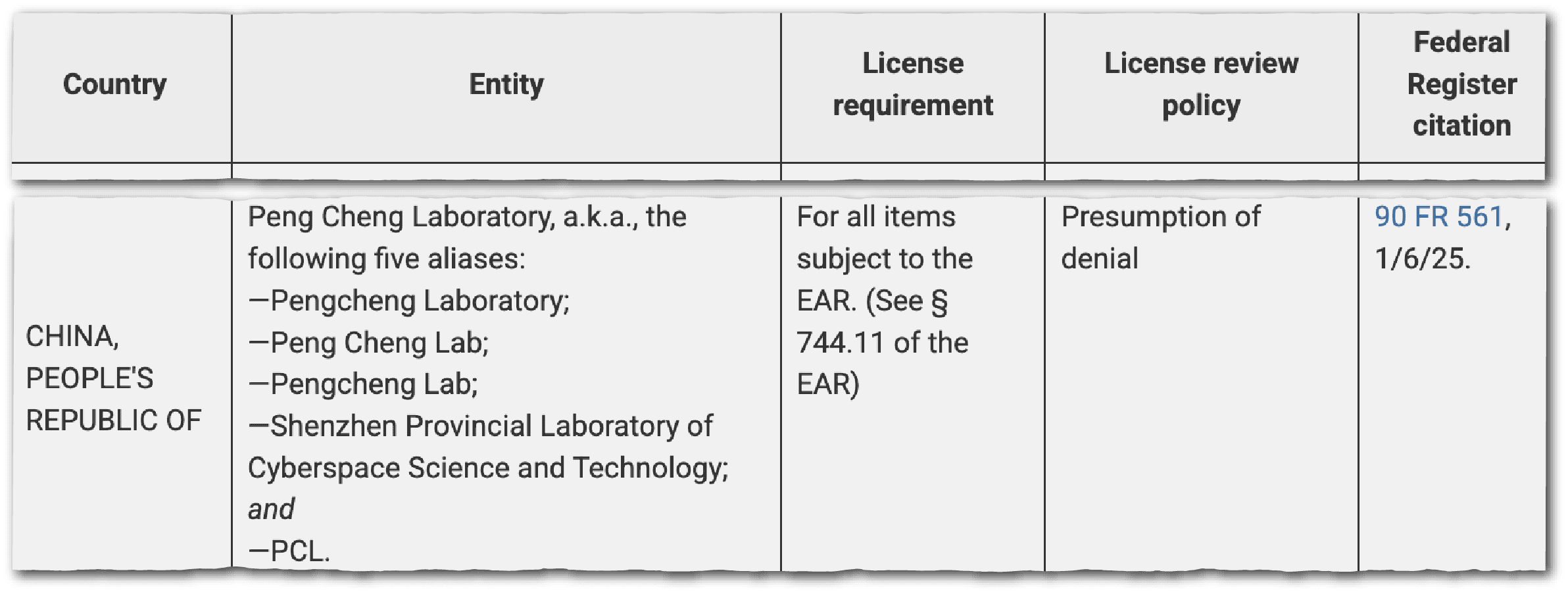

Peng Cheng Lab was itself added to Commerce’s Entity List in January. The Shenzhen-based lab collaborates with China’s National University of Defense Technology and is one of the country’s main “cyber ranges,” training individuals in cybersecurity and hacking.

“It raises an interesting question of whether co-authorship of a few research papers [with a military-affiliated group] is enough justification for a company to be included on a trade blacklist,” says Rebecca Arcesati, lead analyst at the Mercator Institute for China Studies, a European China think tank. “That opens a very big can of worms because a lot of universities in China have these kinds of research collaborations.”

Eliot Chen is a Toronto-based staff writer at The Wire. Previously, he was a researcher at the Center for Strategic and International Studies’ Human Rights Initiative and MacroPolo. @eliotcxchen